On December 28th and 30th, 2025, HomeworkSMP experienced two of its longest outages since the start of this SMP and this world. The total downtime across both outages reached 19 hours and 40 minutes, and the server was fully restored on January 1st, 2026 at 16:33 (all times in this post are in PST/UTC-08:00). The primary cause of the incident was infrastructure issues, with maintenance debt and human error contributing to the length of the outage. We sincerely apologize for the significant disruption over these four days. This outage was preventable, and moving forward we will work to reduce server downtime and prevent similar incidents.

Root cause of the Outage

The outage was caused by a combination of infrastructure misconfiguration, operating system limitations, and deferred maintenance, which together resulted in a prolonged and complex recovery.

The server was hosted on Windows, an environment that proved unsuitable for long-term, headless server operation. On December 28, a failure within Windows Firewall caused it to silently drop inbound and outbound traffic. This prevented the server from communicating reliably with external services, including the Minecraft authentication servers, and blocked players from joining. The result was connection failures and intermittent outages before the server was taken offline.
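A silent loss of outbound connectivity like this can be checked for with a plain TCP probe. The sketch below is a minimal illustration, not the tooling used during the incident: `can_connect` and `probe` are hypothetical helper names, and `sessionserver.mojang.com:443` is assumed here only as a representative authentication endpoint.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers timeouts, refused connections, DNS failures, and
        # "Network is unreachable" style errors.
        return False


def probe(targets):
    """Map each (host, port) pair to whether it is currently reachable."""
    return {f"{host}:{port}": can_connect(host, port) for host, port in targets}


# Assumed example target list; adjust to the services the server depends on.
AUTH_TARGETS = [("sessionserver.mojang.com", 443)]
```

Running `probe(AUTH_TARGETS)` on the server host returns a dictionary mapping each target to `True` or `False`. A `False` result while other machines on the same network can still reach the endpoint would point toward a local cause such as a firewall.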

The impact of this failure was significantly worsened by overdue maintenance. Plans to migrate the server to Linux had been postponed multiple times, leaving the system in a fragile state. As a result, once the firewall issue occurred, recovery required a full operating system migration rather than a targeted fix.

Recovery was further delayed by procedural and hardware-related issues, including:

- Limited physical access and reliance on RDP for a headless system

- Display and installation issues during OS deployment

- Disk permission errors during reinstallation attempts

- Hardware failure during recovery operations

In summary, the root cause was an unsuitable operating system and insufficient preventive maintenance, with human and procedural errors contributing to the duration of the outage.

The Incident

Day 1: Initial Failure and First Outage (Dec 28, 2025)

Early Symptoms

Issues began on the morning of December 28, when players reported intermittent failures connecting to the server. Common errors included “Network is unreachable,” “Connection timed out,” and authentication-related failures.

Image credit: 277V/@ethernet0

By early afternoon, these issues became more frequent. At 16:20, it was confirmed that no players were able to join the server.

Escalation to Full Outage

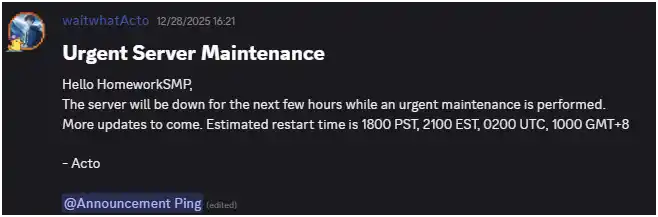

Although the outage was officially announced at 16:21, the server had already experienced intermittent failures earlier in the day. Logs indicate the first confirmed authentication failure occurred at 13:10, consistent with loss of external connectivity.

By 15:17, the server was effectively offline, though it was not formally taken down for maintenance until 16:23. At that point, the incident was escalated to urgent maintenance.

Actions Taken

Initial troubleshooting focused on restoring network connectivity and external service access. Due to the server’s headless configuration and reliance on RDP, access was limited. Tailscale was used to regain access.

After approximately 35 minutes of unsuccessful recovery attempts, it was determined that recovery would require a full operating system change rather than a live fix. At this point, the decision was made to migrate the server to Linux as part of the recovery process.

Partial Restoration and Degraded State

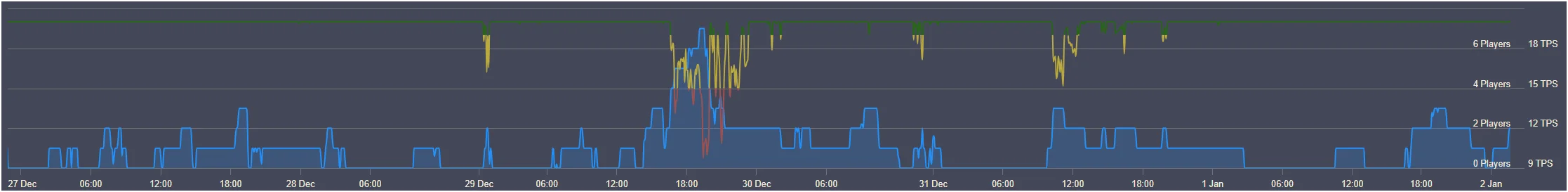

The server was brought back online at 00:04 on December 29. While connectivity was restored, performance was severely degraded. Average MSPT exceeded normal levels, and TPS frequently dropped below acceptable thresholds.

After several configuration attempts, it became clear that further recovery work would be required. At 01:53, a second maintenance window was announced for December 30.

Timeline of Day 1

| Time (in PST/UTC-08:00) | Server Status | Description |
|---|---|---|
| 10:00 12/28/2025 | Normal | No errors or recorded outage. |
| 12:59 | Degraded | Alert raised. |
| 13:05 | Degraded | Server fix attempted. |
| 13:10 | Degraded | First recorded outage, player joins failed. |
| 13:31 | Impacted | Server reported as offline. |
| 14:42 | Degraded | Second server fix attempted. |
| 14:46 | Normal | No errors or recorded outage. |
| 15:17 | Impacted | Server reported as offline. |
| 16:21 | Impacted | Outage announced. |
| 16:23 | Offline | Server taken offline in preparation for recovery. |
| 17:55 | Offline | Proxmox installation 1 began. |
| 18:10 | Offline | Proxmox installation 1 completed. |
| 20:05 | Offline | Server preparation began. |
| 22:05 | Offline | Issue encountered in server preparation. |
| 22:29 | Offline | Proxmox installation 2 began. |
| 22:57 | Offline | Proxmox installation 2 completed. Server preparation began. |
| 00:04 12/29/2025 | Degraded | Server online. Intermittent outages began. |
| 00:48 | Degraded | Intermittent outages ended. End of outage. |
| 01:53 | Degraded | Planned outage on December 30th announced. |

Between Outages (Dec 29, 2025)

Following partial restoration on December 29, server performance was closely monitored. Under a load of 7 players, performance reached historic lows, averaging around 17 TPS and dropping to 9 TPS at worst. To address this, a planned maintenance window was scheduled for December 30 to migrate the server to Ubuntu.
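For readers less familiar with these metrics, the TPS figures above can be related to MSPT (milliseconds per tick). The sketch below assumes Minecraft's default target of 20 ticks per second, which gives each tick a 50 ms budget. The function names are our own, for illustration only.

```python
TARGET_TPS = 20.0                      # default Minecraft tick rate
TICK_BUDGET_MS = 1000.0 / TARGET_TPS   # 50 ms available per tick


def tps_from_mspt(mspt: float) -> float:
    """TPS achievable at a given average tick time, capped at the target."""
    if mspt <= 0:
        raise ValueError("mspt must be positive")
    return min(TARGET_TPS, 1000.0 / mspt)


def mspt_for_tps(tps: float) -> float:
    """Average tick time implied by an observed TPS below the target."""
    if tps <= 0:
        raise ValueError("tps must be positive")
    return 1000.0 / tps


# The two figures reported for December 29:
average_mspt = mspt_for_tps(17)  # roughly 58.8 ms per tick
worst_mspt = mspt_for_tps(9)     # roughly 111.1 ms per tick
```

Under this model, an average of 17 TPS means ticks were taking about 59 ms against a 50 ms budget, and the 9 TPS low point corresponds to ticks taking more than twice the budget.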

Day 2: Planned Recovery Outage (Dec 30, 2025)

Outage Initiation and Backups

Due to persistent performance issues under Proxmox, the decision was made to migrate the server to Ubuntu, which had previously proven stable. The scheduled outage began at 12:00, with backups starting at 12:38 and completing at 13:20. The server was taken offline at 13:22, and proper backup procedures ensured no data was lost. The server was powered off at 14:59.

Installation Attempts and Hardware Challenges

The Ubuntu installation encountered multiple obstacles. Initial attempts failed due to disk permission errors, requiring a full disk wipe before the installation could proceed. Installation attempts were made at 15:05, 15:13, and 15:21, all unsuccessful. After resolving the permission issues at 16:17, a fourth installation was attempted at 16:28.

At 16:29, the motherboard used for the installation failed while the hardware was being handled with power still applied, halting progress. A replacement motherboard was acquired by 17:19, and operations resumed at 18:47. The fifth installation, beginning at 18:55, completed successfully.

Restoration and Degraded Performance

Server configuration and performance tuning began at 19:22. The server returned online at 21:44, with intermittent performance issues continuing until 23:12 due to final configuration adjustments and reboots. After this, the server stabilized in a degraded state, with full recovery achieved on January 1, 2026.

Timeline of Day 2

| Time (in PST/UTC-08:00) | Server Status | Description |
|---|---|---|
| 00:48 12/29/2025 | Degraded | End of first outage. |
| 12:00 12/30/2025 | Degraded | Scheduled outage began. |
| 12:38 | Degraded | Backups began. |
| 13:20 | Degraded | Backups completed. |
| 13:22 | Offline | Server taken offline. |
| 14:59 | Offline | Server powered off. |
| 15:05 | Offline | Ubuntu installation attempt 1 began. |
| 15:13 | Offline | Ubuntu installation attempt 2 began. |
| 15:21 | Offline | Ubuntu installation attempt 3 began. |
| 16:05 | Offline | Issue identified: Disk I/O. |
| 16:14 | Offline | Disk I/O issue resolved. |
| 16:17 | Offline | Ubuntu installation attempt 4 began. |
| 16:29 | Offline | Motherboard failure. Operations paused. |
| 17:19 | Offline | Replacement motherboard obtained. |
| 18:47 | Offline | Operations resumed. |
| 18:55 | Offline | Ubuntu installation attempt 5 began. |
| 19:22 | Offline | Ubuntu installation completed. Server configuration began. |
| 21:44 | Degraded | Server online. |
| 21:59 – 23:12 | Degraded / Impacted | Intermittent outages for performance tweaks. |
| 23:15 | Degraded | Intermittent outages ended. End of outage. |

Degraded Performance after the Outages

Even after the server came back online following the second outage, performance remained degraded. Average TPS stabilized around 17 (50%) while MSPT remained above optimal levels, resulting in noticeable lag during gameplay.

We closely monitored the system to identify the cause. On January 1, 2026, it was discovered that performance was being throttled because the server’s battery had been removed during the first outage to reduce operational interruptions and had not been reinstalled. Once the battery was replaced at 16:33, the server returned to full performance, with TPS and MSPT within normal operating thresholds.

We’d like to sincerely thank 277V/@ethernet0 for their support throughout this outage, providing guidance on technical challenges and helping us navigate the recovery process. Their help made a significant difference in how we were able to restore the server.

What we learned

These outages emphasized the importance of proactive maintenance and infrastructure planning. Windows proved to be an unsuitable long-term environment for a headless Minecraft server, and deferring the planned migration to Linux left the system fragile and vulnerable to extended downtime. We also learned that relying solely on RDP for administrative access is insufficient for a headless server setup, and that simultaneous hardware, OS, and disk-level issues can compound one another and complicate recovery. Even seemingly minor oversights, such as leaving the battery out, can have a significant impact on performance. The experience reinforced the value of robust backups, continuous monitoring, and thorough documentation to prevent data loss and maintain uptime.

How we are preventing a recurrence

Moving forward, HomeworkSMP has implemented a series of measures to prevent similar incidents. The server has been fully migrated to Ubuntu Linux with a tested and repeatable deployment procedure. Recovery procedures have been updated to account for hardware failures, installation challenges, and other simultaneous failures. Redundant administrative access methods have been established, including Tailscale, Cloudflared, SSH, and direct console access. In addition, continuous monitoring of TPS and MSPT with automated alerts will allow the team to respond quickly to performance degradation. Finally, all procedures, lessons learned, and best practices are now documented comprehensively to guide future maintenance and onboarding.
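As an illustration of the kind of automated TPS alerting described above, here is a minimal sketch. It is not our production monitoring: the `TpsMonitor` class, the 18 TPS threshold, and the window size are all hypothetical values chosen for the example. It averages recent samples so that a single slow tick does not trigger an alert, and it reports only state changes.

```python
from collections import deque
from statistics import fmean


class TpsMonitor:
    """Rolling-average TPS check that reports state changes only."""

    def __init__(self, threshold: float = 18.0, window: int = 5):
        self.threshold = threshold           # hypothetical alert threshold
        self.samples = deque(maxlen=window)  # most recent TPS readings
        self.alerting = False

    def record(self, tps: float):
        """Add a sample; return 'alert', 'recovered', or None if unchanged."""
        self.samples.append(tps)
        if len(self.samples) < self.samples.maxlen:
            return None  # not enough data to judge yet
        degraded = fmean(self.samples) < self.threshold
        if degraded and not self.alerting:
            self.alerting = True
            return "alert"
        if not degraded and self.alerting:
            self.alerting = False
            return "recovered"
        return None


# Example: feed in a short series of readings with a window of 3 samples.
monitor = TpsMonitor(threshold=18.0, window=3)
events = [monitor.record(t) for t in [20, 20, 20, 17, 9, 17, 20, 20, 20]]
```

In this example the monitor raises a single alert when the rolling average first falls below the threshold and a single recovery notice once it climbs back, rather than repeating the alert on every sample.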